SCORM 2004 vs 1.2: What’s Different and Why It Matters

Trying to choose between SCORM 1.2 and SCORM 2004? It’s a common roadblock, and getting it wrong can lead to broken tracking, confused learners, or courses that don’t work with your LMS.

SCORM was created as a reference model, not a rigid rulebook. It combined the best ideas from earlier standards like AICC and IMS, aiming to simplify how content is delivered and tracked across platforms.

SCORM 1.2 is known for its simplicity and broad support. SCORM 2004 offers more advanced features, like sequencing, detailed reporting, and flexible completion tracking. Sounds great, but it’s not always the best choice.

The catch? Not every LMS handles both standards equally. Some only support SCORM 1.2. Others claim to support 2004 but skip the trickier parts. And if you’re building interactive or multi-module content, the version you choose directly affects what’s possible — and what breaks.

This guide breaks down SCORM 1.2 vs 2004, from tracking features and data limits to real-world compatibility, so you can pick the version that fits your project (and your LMS).

Key Takeaways

The article compares SCORM 1.2 and SCORM 2004, explaining that SCORM 1.2 is widely supported and simple, while SCORM 2004 adds advanced features like separate completion and success statuses, sequencing, richer reporting, and larger data limits. It notes that compatibility varies by LMS, with many still preferring SCORM 1.2. The guide also discusses the pros and cons of each version, how to choose based on course needs and platform support, and mentions newer standards like xAPI that complement SCORM. Using the right authoring tool simplifies exporting and tracking in either format.

Key Technical Differences: SCORM 2004 vs 1.2

Not all SCORM versions are created equal, and picking the wrong one can cause real headaches. Think glitchy tracking, confused learners, or content that just won’t work with your LMS. Knowing the key differences helps you choose smarter, avoid rework, and build training courses that do what you need them to.

It matters not just to developers but to instructional designers, content authors, and anyone building real-world courses. Understanding what each version supports, from web content to sequencing rules, makes for smoother learning activities.

But before we dive into the specs, it’s worth noting that while SCORM 2004 was designed to solve many of 1.2’s limitations, adoption hasn’t been universal. Many LMSs still prefer the simpler 1.2, while others embrace the full power of 2004 or even mix it with newer standards like xAPI.

A frequently asked question is: if SCORM 1.2 still works, why bother with newer options or mix them at all?

Expert insight:

“Many organizations are adopting a hybrid approach, utilizing both SCORM and xAPI. SCORM remains a reliable standard for traditional eLearning courses with basic tracking needs. A full transition to xAPI is still relatively uncommon, as it requires additional infrastructure, such as a Learning Record Store (LRS).”

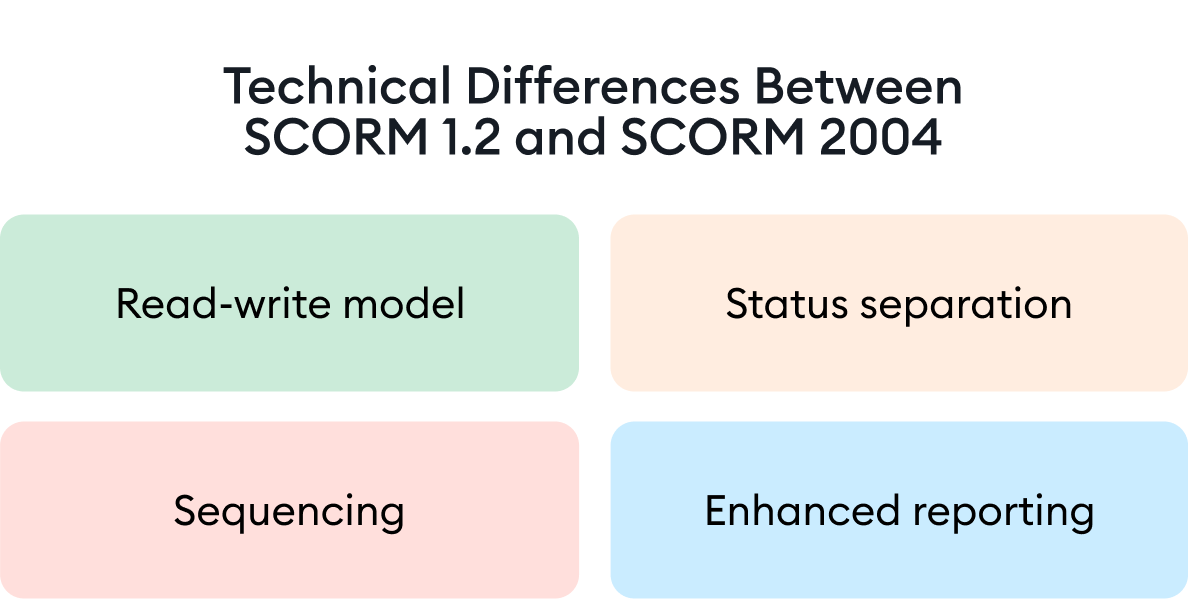

Read-write model

SCORM 2004 made interaction data read-write. A course can now read back a learner's earlier inputs, which makes reports richer and smarter. In SCORM 1.2, interaction data is write-only: the course can record an answer but can't read it back later. For example, a SCORM 2004 package can retrieve earlier learner answers, adjust the path, and improve tracking, something SCORM 1.2 cannot do with its interaction records.
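As a rough illustration of reading interaction data back, here is a minimal TypeScript sketch. The element names (`cmi.interactions._count`, `cmi.interactions.n.learner_response`) come from the SCORM 2004 data model. The `FakeLms2004` class is a hypothetical in-memory stand-in for an LMS, not a real adapter.

```typescript
// Minimal slice of the SCORM 2004 run-time API; a real LMS exposes it as API_1484_11.
interface Scorm2004Api {
  GetValue(element: string): string;
  SetValue(element: string, value: string): string;
}

// Hypothetical in-memory stand-in for an LMS, for illustration only.
class FakeLms2004 implements Scorm2004Api {
  private store: Record<string, string> = {};

  GetValue(element: string): string {
    if (element === "cmi.interactions._count") {
      // A real LMS maintains this count; here it is derived from the highest index written.
      let highest = -1;
      for (const key of Object.keys(this.store)) {
        const match = /^cmi\.interactions\.(\d+)\./.exec(key);
        if (match !== null) highest = Math.max(highest, Number(match[1]));
      }
      return String(highest + 1);
    }
    const value = this.store[element];
    return value === undefined ? "" : value;
  }

  SetValue(element: string, value: string): string {
    this.store[element] = value;
    return "true";
  }
}

// Reads back every recorded learner response, which the SCORM 2004 data model allows.
function readLearnerResponses(api: Scorm2004Api): string[] {
  const count = Number(api.GetValue("cmi.interactions._count"));
  const responses: string[] = [];
  for (let i = 0; i < count; i++) {
    responses.push(api.GetValue(`cmi.interactions.${i}.learner_response`));
  }
  return responses;
}

// Usage: record two answers, then read them back later in the session.
const demoLms = new FakeLms2004();
demoLms.SetValue("cmi.interactions.0.id", "q1");
demoLms.SetValue("cmi.interactions.0.learner_response", "b");
demoLms.SetValue("cmi.interactions.1.id", "q2");
demoLms.SetValue("cmi.interactions.1.learner_response", "true");
const earlierAnswers = readLearnerResponses(demoLms);
```

In SCORM 1.2 the matching element is `cmi.interactions.n.student_response`, and a conforming LMS rejects attempts to read it.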

Status separation

SCORM 1.2 had just one status bucket (cmi.core.lesson_status). SCORM 2004 split it into cmi.completion_status (completed/incomplete) and cmi.success_status (passed/failed). So now you can tell if someone finished a course but flunked the quiz. That extra detail is helpful in computer-based training or compliance scenarios, where knowing who passed a course, and who simply finished it, really matters.
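To see what gets lost when two statuses are squeezed into one, consider this small TypeScript sketch. The status vocabularies are taken from the two data models. The mapping policy itself (a pass/fail result takes priority over completion) is an assumption for illustration, and authoring tools may choose differently.

```typescript
type CompletionStatus = "completed" | "incomplete" | "not attempted" | "unknown";
type SuccessStatus = "passed" | "failed" | "unknown";
type LessonStatus12 =
  | "passed" | "completed" | "failed" | "incomplete" | "browsed" | "not attempted";

// Collapses the two SCORM 2004 statuses into the single SCORM 1.2 status.
// Assumed policy: a known pass/fail result wins, because there is only one slot.
function toLessonStatus12(
  completion: CompletionStatus,
  success: SuccessStatus
): LessonStatus12 {
  if (success === "passed") return "passed";
  if (success === "failed") return "failed";
  if (completion === "completed") return "completed";
  if (completion === "incomplete") return "incomplete";
  return "not attempted";
}

// A learner who finished the course but failed the quiz:
const finishedButFailed = toLessonStatus12("completed", "failed"); // "failed"
// A learner who finished a course that has no quiz:
const finishedNoQuiz = toLessonStatus12("completed", "unknown"); // "completed"
```

With this policy, the first learner shows up in a SCORM 1.2 report only as "failed". That they also completed the course is no longer visible.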

Sequencing

Unique to SCORM 2004, sequencing lets you set rules for how learners move through content: think custom learning paths, prerequisites, and control over where a learner lands when they resume.
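In a real package, sequencing rules are declared in the manifest and enforced by the LMS, not by course code. The TypeScript sketch below is only a simplified model of what one common rule does: each module unlocks once the previous one is completed. `ModuleState` and `availableModules` are hypothetical names, not part of the specification.

```typescript
interface ModuleState {
  id: string;
  completed: boolean;
}

// Returns the ids a learner may open when every module requires
// the one before it to be completed (a simple prerequisite chain).
function availableModules(modules: ModuleState[]): string[] {
  const open: string[] = [];
  for (const current of modules) {
    open.push(current.id);
    if (!current.completed) break; // everything after the first unfinished module stays locked
  }
  return open;
}

const coursePath: ModuleState[] = [
  { id: "intro", completed: true },
  { id: "safety-basics", completed: false },
  { id: "final-quiz", completed: false },
];
const unlocked = availableModules(coursePath); // ["intro", "safety-basics"]
```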

Enhanced reporting

With its smarter status tracking and interaction details, SCORM 2004 lets you spot patterns. For example, it shows who is completing compliance training but still struggling with assessments. This matters even more for eLearning programs that need to scale and show results, especially when they’re integrated with an LMS.

SCORM 1.2 vs SCORM 2004: A Brief Comparison

| Feature | SCORM 1.2 | SCORM 2004 |
|---|---|---|
| Separate completion/success statuses | No | Yes |
| Suspend data limit (characters) | 4,096 | 64,000 (3rd ed. and up) |
| Interaction descriptions | No | Yes |
| Multiple SCOs per package | No | Yes |
| Sequencing and partial scoring | No | Yes |
| LMS and tool support | 90%+ | Under 50% |
| Installation complexity | Lower | Higher |
| Stores metadata | Limited | Yes |
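The suspend data limit is the row that most often bites in practice. A quick check like the TypeScript sketch below can flag a course state that won't fit. The limits are the figures from the table. Serializing state as JSON is an assumption, and `fitsSuspendData` is a hypothetical helper name.

```typescript
type ScormTarget = "1.2" | "2004-3rd-plus";

// Character limits for cmi.suspend_data, as listed in the comparison table.
const SUSPEND_LIMIT: Record<ScormTarget, number> = {
  "1.2": 4096,
  "2004-3rd-plus": 64000,
};

// Returns true if the serialized course state fits the target's limit.
function fitsSuspendData(state: object, target: ScormTarget): boolean {
  const serialized = JSON.stringify(state);
  return serialized.length <= SUSPEND_LIMIT[target];
}

// Example: a state object carrying 5,000 characters of learner notes.
const bulkyState = { notes: new Array(5001).join("x") };
const fitsIn12 = fitsSuspendData(bulkyState, "1.2"); // false
const fitsIn2004 = fitsSuspendData(bulkyState, "2004-3rd-plus"); // true
```

An individual LMS may enforce the limit more or less strictly, so treat the result as a warning sign, not a guarantee.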

Compatibility and popularity

According to Rustici Software’s 2024 report, SCORM is still the most-used eLearning standard, with millions of courses launched every month. SCORM 1.2 accounts for most of that, and together with the SCORM 2004 editions it powers the bulk of what’s happening in LMSs today. Why? Because SCORM is reliable and works with nearly every LMS, and that still matters.

And it’s not slowing down: for example, in 2021 uploads to SCORM Cloud jumped by nearly 46% in just one year, and learner registrations grew by 31%.


Pros and cons

SCORM 1.2: advantages and limitations

Pros

- It’s one of the most supported formats on the market.

- SCORM 1.2 is simple to set up and run.

- It’s proven and reliable.

Cons

- It only tracks one status at a time.

- The small data cap (4,096 characters) limits bigger courses.

- Write-only tracking makes reporting shallow.

- Its reports can’t include the text of quiz questions, only the learner’s answers.

- It doesn’t support reusable SCOs, which limits modular design.

SCORM 2004: advantages and limitations

Pros

- It tracks completion and success separately.

- Its big data limit (64,000 characters) is perfect for longer courses.

- SCORM 2004 lets you create sequenced learning paths.

- It offers richer reporting with question text and analytics.

Cons

- It’s not as widely supported.

- The sequencing spec is complex and often skipped by vendors.

So, while SCORM 1.2 remains the safer choice in many cases, understanding the strengths of both versions helps clarify the bigger picture in the SCORM 1.2 vs SCORM 2004 debate.

How to Choose Between SCORM 1.2 and 2004

Whether you’re comparing SCORM 2004 and SCORM 1.2 for a new LMS rollout or revisiting legacy courses, the key is to match the standard to your course structure and tracking needs.

Go with SCORM 1.2 if:

- Your tools don’t fully support 2004.

- You’re building bite-sized or simple courses.

- You want broad compatibility and fewer tech hassles.

Pick SCORM 2004 if:

- You want sequencing, structured paths, or partial scoring.

- Your course is long, and learners need to pause and resume.

- Your platform supports 2004, and you’ve got coding resources.

Always check compatibility before choosing, especially if mixing tools and LMS platforms.
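One quick compatibility check is to see which API object the LMS exposes to launched content: SCORM 1.2 content looks for an object named `API`, while SCORM 2004 content looks for `API_1484_11`. The TypeScript sketch below walks up a chain of frames to find either. `FrameLike` is a hypothetical stand-in for the browser's `window`, so the example runs without a browser, and it does not search opener windows.

```typescript
// Hypothetical stand-in for a browser window in the LMS frame hierarchy.
interface FrameLike {
  API?: object;          // SCORM 1.2 adapter
  API_1484_11?: object;  // SCORM 2004 adapter
  parent?: FrameLike;
}

type DetectedVersion = "2004" | "1.2" | "none";

// Walks up the parent chain and reports which adapter is found first.
function detectScormVersion(start: FrameLike, maxHops = 10): DetectedVersion {
  let frame: FrameLike | undefined = start;
  for (let hops = 0; frame !== undefined && hops <= maxHops; hops++) {
    if (frame.API_1484_11 !== undefined) return "2004";
    if (frame.API !== undefined) return "1.2";
    // In a browser the top window is its own parent, so stop there.
    frame = frame.parent === frame ? undefined : frame.parent;
  }
  return "none";
}

// Usage: content launched two frames below an LMS that exposes the 1.2 adapter.
const lmsFrame: FrameLike = { API: {} };
const contentFrame: FrameLike = { parent: { parent: lmsFrame } };
const detected = detectScormVersion(contentFrame); // "1.2"
```

Finding `API_1484_11` only shows that the LMS offers a SCORM 2004 adapter. It says nothing about how completely sequencing is implemented, so a test upload is still worth the time.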

Where SCORM Stands Today: xAPI and cmi5 Context

Newer standards like Experience API (xAPI) were built as the next generation of learning tech: browser-free, LMS-optional, and open to everything from mobile apps to VR headsets. Both xAPI and cmi5 (an xAPI profile that defines how an LMS launches xAPI content) can track much more than SCORM ever could, including offline learning, mobile activity, and even social and real-world experiences. xAPI enables deep, flexible reporting, better feedback collection, and faster content improvements, all without relying on a constant LMS connection.

But here’s the key point: xAPI doesn’t replace SCORM; it complements it.

SCORM packages are still widely used, especially in traditional LMS setups. They’re effective for tracking whether a user starts or completes a course and managing access to different course sections. SCORM also ensures broad compatibility with existing platforms.

Still, SCORM has its limits. It primarily functions within the LMS and struggles to track learning that happens elsewhere, like on mobile apps, in standalone web content, or during real-life training scenarios.

That’s where xAPI comes in. It expands the ability to track and analyze learning across platforms and environments. You get a richer view of how people learn and can use that data to drive continuous improvements. Many systems now work with both SCORM and xAPI, giving you the flexibility to use SCORM for structured, LMS-based training and xAPI for tracking learning everywhere else.

By using both, you gain greater compatibility, deeper insights, and more control over the full learning experience.
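For a sense of what xAPI data looks like, here is a minimal TypeScript sketch of a statement with the usual actor, verb, and object parts, plus a result. The verb IRI is the ADL "completed" verb. The learner, email address, and activity URL are made-up placeholders, and `buildCompletedStatement` is a hypothetical helper. Sending the statement to an LRS is left out.

```typescript
interface XapiStatement {
  actor: { objectType: "Agent"; name: string; mbox: string };
  verb: { id: string; display: Record<string, string> };
  object: { objectType: "Activity"; id: string };
  result: { completion: boolean; success: boolean; score: { scaled: number } };
}

// Builds a "completed" statement; success depends on the score versus the pass mark.
function buildCompletedStatement(
  name: string,
  email: string,
  activityId: string,
  scaledScore: number,
  passMark: number
): XapiStatement {
  return {
    actor: { objectType: "Agent", name: name, mbox: `mailto:${email}` },
    verb: {
      id: "http://adlnet.gov/expapi/verbs/completed",
      display: { "en-US": "completed" },
    },
    object: { objectType: "Activity", id: activityId },
    result: {
      completion: true,
      success: scaledScore >= passMark,
      score: { scaled: scaledScore },
    },
  };
}

// Placeholder learner and activity, for illustration only.
const sampleStatement = buildCompletedStatement(
  "Example Learner",
  "learner@example.com",
  "https://example.com/courses/fire-safety",
  0.55,
  0.8
);
```

The `result` block records completion and success separately, much like SCORM 2004 does, but the statement can come from any app, not just LMS-launched content.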

Expert insight:

“SCORM 1.2 is generally recommended only for small courses or when dealing with older LMS platforms. For most modern eLearning needs, SCORM 2004 is preferred, due to its enhanced capabilities, such as increased data storage capacity and more comprehensive tracking features.”

Bottom line? SCORM isn’t dead; it’s just sharing the stage.

The Role of Authoring Tools

For instructional designers and content authors, the right authoring tool takes care of the messy parts — versioning, packaging, and exports — so they can focus on what matters: the learning experience. It can make or break your SCORM workflow.

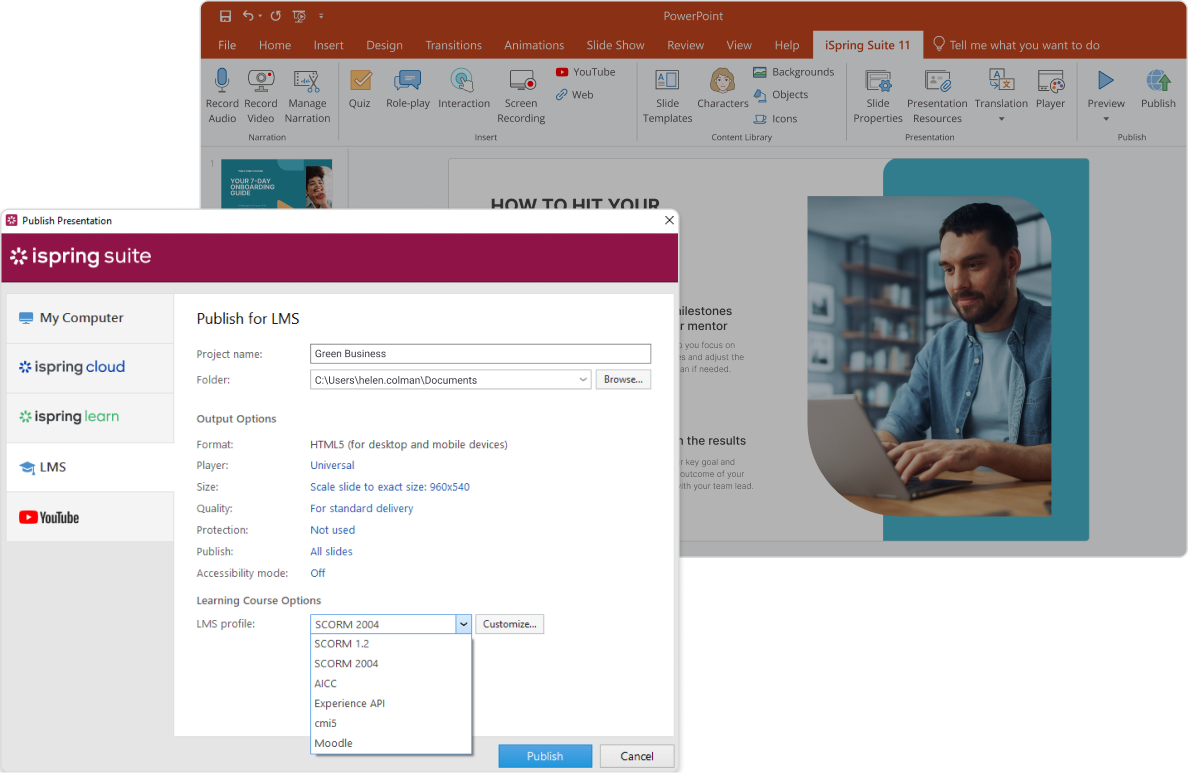

Popular tools like iSpring Suite, Articulate, and Adobe Captivate support both SCORM 1.2 and 2004, but they’re not all equal in how they handle the standards’ quirks. For example, SCORM 1.2 has tight limits on data size and status tracking. Some tools — like iSpring Suite — smooth over those rough edges behind the scenes, so you spend less time debugging and more time designing.

They also simplify SCORM implementation, allowing content authors to create a single content package with lessons, quizzes, and interactions — ready to export to SCORM 1.2, SCORM 2004, or even xAPI.

Expert insight:

“Modern authoring tools support exporting courses in both SCORM 1.2 and SCORM 2004 formats, allowing creators to choose the appropriate version based on their LMS requirements. These tools offer flexible settings to help circumvent standard limitations, such as optimizing suspend data size and supporting complex interactions. Many also integrate xAPI support, expanding tracking and analytics capabilities.

For example, iSpring Suite enables easy export to SCORM 1.2, SCORM 2004, and xAPI formats, facilitating compatibility with various LMS platforms.”

Bottom line: the right authoring tool won’t just publish your course. It will save you time, cut troubleshooting, and future-proof your content.

FAQ

Which is newer, SCORM 1.2 or 2004?

SCORM 2004 is the newer version, released in 2004, while SCORM 1.2 came out in 2001.

What is the difference between SCORM 1.2 and 2004?

SCORM 2004 adds sequencing, separate statuses, better reporting, and more data storage. SCORM 1.2 is simpler and more widely supported.

Which is better, SCORM 1.2 or 2004?

Expert insight:

“It depends on your course needs. SCORM 1.2 is best when you need simplicity and broad LMS support, especially for short, web-based training. But if you need sequencing, detailed tracking, or want to split completion and success statuses, then SCORM 2004 gives you more control.”

What is the difference between SCORM 2004, 3rd edition and 4th edition?

Both editions offer similar core features. The 3rd edition increased data limits significantly (suspend data grew to 64,000 characters), and the 4th edition improved sequencing stability and added fixes.

Is SCORM 1.2 still a good option for online training?

Yes, especially for simpler training courses or older LMS platforms. It’s one of the most common and stable formats even today.

How to convert SCORM 1.2 to SCORM 2004?

To convert SCORM 1.2 to SCORM 2004, use an authoring tool or SCORM packaging software that supports both standards, such as iSpring Suite. Then update the manifest file and adjust sequencing, navigation, and metadata to align with the SCORM 2004 specifications. Always test the converted package in a SCORM 2004-compliant LMS to ensure functionality.
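If a course has hand-written tracking code, part of the conversion is renaming API calls and data model elements. The TypeScript sketch below lists a few of the well-known renames and converts a session time from the SCORM 1.2 format to the ISO 8601 duration that SCORM 2004 expects. It is a partial map for illustration, not a complete converter. Note that `cmi.core.lesson_status` is left out because it has no one-to-one match.

```typescript
// API function renames between the two run-time adapters.
const API_CALL_RENAMES: Record<string, string> = {
  LMSInitialize: "Initialize",
  LMSFinish: "Terminate",
  LMSGetValue: "GetValue",
  LMSSetValue: "SetValue",
  LMSCommit: "Commit",
  LMSGetLastError: "GetLastError",
};

// A partial list of data model renames (SCORM 1.2 -> SCORM 2004).
const ELEMENT_RENAMES: Record<string, string> = {
  "cmi.core.student_id": "cmi.learner_id",
  "cmi.core.student_name": "cmi.learner_name",
  "cmi.core.lesson_location": "cmi.location",
  "cmi.core.score.raw": "cmi.score.raw",
  "cmi.core.session_time": "cmi.session_time",
  "cmi.core.exit": "cmi.exit",
  "cmi.suspend_data": "cmi.suspend_data",
};

// Converts a SCORM 1.2 timespan such as "0001:30:15" into an
// ISO 8601 duration such as "PT1H30M15S", the format SCORM 2004 uses.
function toIso8601Duration(time12: string): string {
  const match = /^(\d{2,4}):(\d{2}):(\d{2}(?:\.\d{1,2})?)$/.exec(time12);
  if (match === null) {
    throw new Error(`Not a SCORM 1.2 timespan: ${time12}`);
  }
  const hours = Number(match[1]);
  const minutes = Number(match[2]);
  const seconds = Number(match[3]);
  return `PT${hours}H${minutes}M${seconds}S`;
}

const convertedTime = toIso8601Duration("0001:30:15"); // "PT1H30M15S"
```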

Final Thoughts

In the end, there’s no universal answer to the SCORM 1.2 vs SCORM 2004 debate. It depends on your content, platform, and goals. Yes, version 1.2 still rules the eLearning world for a reason: it’s simple, stable, and works almost everywhere. But SCORM 2004 provides you with more control and smarter tracking.

Whichever you choose, the right tools make a huge difference. An authoring tool like iSpring Suite can help you get the most out of either standard, without headaches. Get a free 14-day trial now.